The escalating global race to build artificial intelligence is changing the fundamental way computers are constructed.

The Rise of the GPU

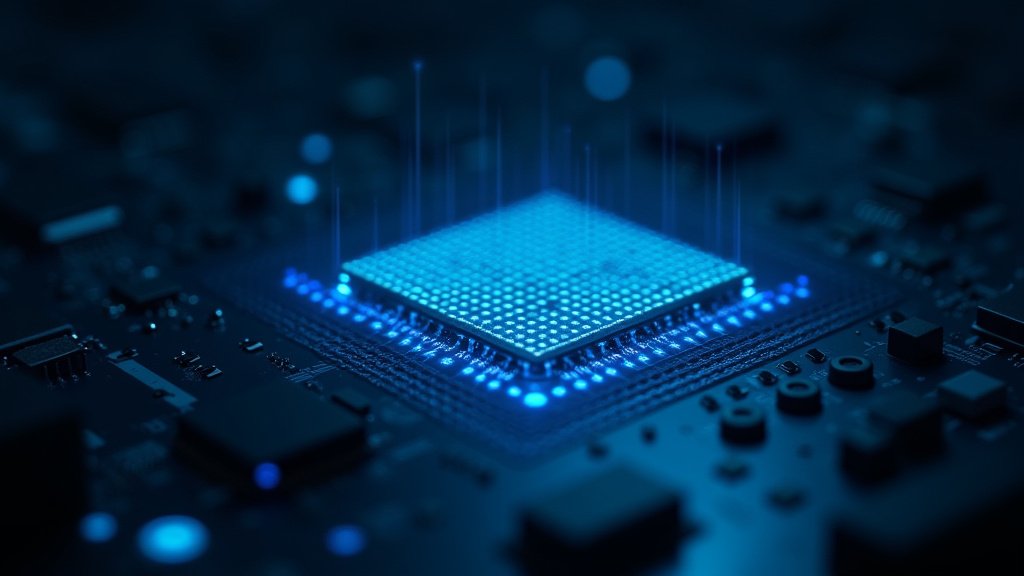

At the heart of this change lies the graphics processing unit, or GPU. Originally developed for rendering complex graphics in video games, GPUs have an architecture built to perform many calculations simultaneously. This parallel processing capability makes them well suited to the demanding computations behind artificial intelligence systems, particularly machine learning and neural network training.
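To make "many calculations simultaneously" concrete, here is a minimal sketch in plain Python of the kind of work a neural network layer performs. The function names and the tiny weight table are hypothetical, chosen only for illustration. Python runs these steps one after another; the point is that each output depends only on its own row of weights, so parallel hardware such as a GPU could compute every row at the same time.

```python
# Illustrative sketch only: plain Python executes these rows sequentially.
# On a GPU, each output row could be assigned to its own hardware thread.

def dot(row, vector):
    """Multiply-accumulate one row of weights against the input vector."""
    return sum(w * x for w, x in zip(row, vector))

def layer_forward(weights, vector):
    """Compute every output of a tiny neural-network layer.

    Each output uses only its own row of weights, so no output has to wait
    for another. That independence is what parallel hardware exploits.
    """
    return [dot(row, vector) for row in weights]

# Hypothetical 4x3 weight table and 3-element input.
weights = [
    [1.0, 0.0, 2.0],
    [0.5, 1.0, 0.0],
    [0.0, 3.0, 1.0],
    [2.0, 2.0, 2.0],
]
inputs = [1.0, 2.0, 3.0]

outputs = layer_forward(weights, inputs)
print(outputs)  # [7.0, 2.5, 9.0, 12.0]
```

A real model repeats this pattern across millions or billions of weights, which is why hardware that handles thousands of rows at once matters so much.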

Leading technology companies are now densely packing these powerful chips into specialized computing systems. This strategic deployment reflects a critical understanding of the computational demands of modern AI, which often requires processing vast datasets and executing intricate algorithms at unprecedented speeds.

Forging a New Class of Supercomputer

The aggregation of these processors is creating what can be described as a new type of supercomputer. Unlike traditional supercomputers designed for diverse scientific workloads, these machines are purpose-built for AI. Their scale and density are striking: a single system can incorporate up to 100,000 chips wired together. This interconnection is crucial for distributing and accelerating the intensive computational workloads involved in developing sophisticated AI models.
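One common way to spread training across many chips is data parallelism: each chip processes its own slice of the data, and the partial results are then combined. The sketch below simulates that idea in plain Python under simplifying assumptions. The toy model (y = weight * x), the function names, and the sample data are all hypothetical, and a real system would combine results over a high-speed network rather than in a single loop.

```python
# Simulated data parallelism: the "chips" here are just list slices.

def shard(batch, num_chips):
    """Split a batch into num_chips near-equal contiguous slices."""
    size, extra = divmod(len(batch), num_chips)
    shards, start = [], 0
    for i in range(num_chips):
        end = start + size + (1 if i < extra else 0)
        shards.append(batch[start:end])
        start = end
    return shards

def local_gradient(weight, examples):
    """Sum of squared-error gradients for the toy model y = weight * x."""
    return sum(2 * (weight * x - y) * x for x, y in examples)

def data_parallel_gradient(weight, batch, num_chips):
    """Each simulated chip handles one shard; the partial sums are combined."""
    partials = [local_gradient(weight, s) for s in shard(batch, num_chips)]
    return sum(partials) / len(batch)

# Hypothetical training data following y = 2x.
batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0), (5.0, 10.0)]

# Splitting the work across more chips leaves the combined result unchanged.
print(data_parallel_gradient(1.0, batch, 1))  # -22.0
print(data_parallel_gradient(1.0, batch, 2))  # -22.0
```

Because the combined answer does not depend on how the data is split, adding chips mainly shortens the time each one spends on its slice. The cost shifts to the combining step, which is one reason the wiring between chips matters as much as the chips themselves.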

These colossal computing engines are housed within dedicated facilities known as data centers. While data centers themselves are not a new phenomenon – big tech companies, for instance, have been constructing them worldwide for two decades – their function and internal architecture are evolving dramatically.

Shifting Focus in Data Center Design

For the past twenty years, the global construction of data centers by major technology firms has supported a wide array of digital services, from cloud computing to online storage and traditional data processing. However, the advent of the AI race has necessitated a significant shift in focus.

The emphasis is increasingly moving towards building highly specialized, GPU-accelerated systems specifically optimized for AI computation. These new data centers, or sections within existing ones, are engineered from the ground up to support the power, cooling, and networking requirements of densely packed GPU clusters, a stark contrast to the more general-purpose infrastructure of the past.

This evolution in hardware design underscores the computational intensity of AI development. Training cutting-edge AI models requires computational resources far exceeding those needed for traditional software applications or even general cloud services. The shift toward massive, GPU-driven systems shows how rapidly infrastructure is being built to fuel the next generation of artificial intelligence capabilities.

Implications for the Future

The transformation in computer architecture driven by AI is not merely a technical detail; it represents a fundamental reshaping of the technological landscape. The global pursuit of more powerful and capable AI systems is directly influencing the physical machines that underpin them. This trend is likely to accelerate, potentially leading to further innovations in chip design and data center infrastructure as the demands of AI continue to grow.

The concentration of these powerful, specialized computing resources also highlights the significant investment and infrastructure required to remain competitive in the global AI race. It signifies that the future of artificial intelligence is not just about algorithms and software, but equally about the hardware foundation being laid, chip by chip, within the specialized confines of the modern data center.